There’s a specific kind of dread that project managers and L&D leaders carry quietly into the beginning of every new engagement. Not the obvious kind – not the fear that people aren’t talented, or that the training wasn’t good enough. It’s subtler than that. It’s the dread of not really knowing. Of sending people into real work, on real timelines, with real client expectations, based on a set of numbers that looked fine on a dashboard – and hoping the numbers were telling the truth.

Most of the time, nobody says this out loud. You run the training. People complete it. Scores come in. Certifications go out. The readiness factor seems fine, and the project kicks off. And then the project slips. The client pushes back. The senior engineers are pulled in again. And somewhere in that room, someone finally asks the question everyone has been avoiding:

“Were they actually ready? Or did we just assume they were?”

That question is the most expensive unanswered question in enterprise talent. And for years, the certification was the answer everyone agreed to trust – not because it was right, but because nothing better existed.

Until EASE – Engine for AI-Based Skill Validation did.

And in 2026, as AI-driven workflows have become standard operating procedure and the cost of a skill gap has moved from “inconvenient” to “commercially damaging,” that distinction matters more than it ever has. In an environment where true AI-Powered Skill Assessment is finally possible, the old reliance on certifications is no longer defensible.

The Certification Was Never the Problem. The Trust Was.

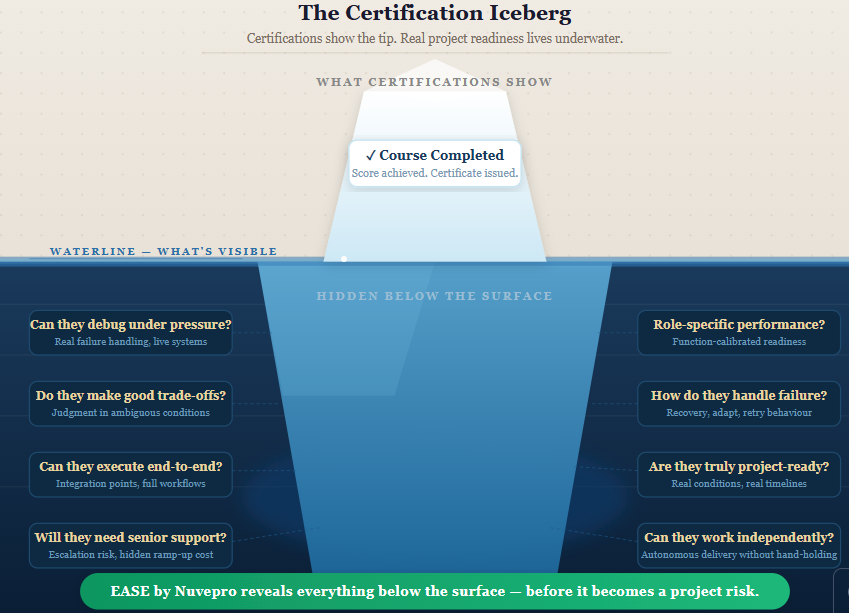

To be clear, certifications aren’t the villain here. They were built to do something specific: confirm that a professional had been exposed to a body of knowledge and could demonstrate recall of it under test conditions. For that purpose, they work.

The problem is what enterprises did next. They took that confirmation of exposure and treated it as proof of readiness. They looked at a certified team, said “we’re good to go,” and sent them into live projects, real client environments, and high-stakes delivery situations based on that assumption.

That leap from “certified” to “ready” is where the lie lives. Not in the certification itself. In the trust that was placed in what a certification was never designed to guarantee.

A 2025 IBM Institute for Business Value report found that 87% of enterprise leaders believe their workforce lacks the skills needed to execute on current technology priorities. That number would be alarming enough on its own. What makes it worse is that the same organisations reporting this gap also have active training programs in place. The learning is happening. The readiness isn’t following.

LinkedIn’s 2025 Workplace Learning Report reinforced the same theme: while L&D investment globally has continued to rise, only 36% of business leaders say they can confidently measure whether that learning translates into actual job performance. The rest are operating on assumption.

McKinsey’s research, updated through early 2026, puts a price on that assumption. Organisations that deploy people before their skills are genuinely validated see project timelines extend by an average of 23% and escalations to senior engineers increase significantly, which often masks the problem rather than surfacing it.

The problem isn’t that enterprises aren’t investing in skilling. They are. The problem is that traditional technical skill assessments were never built to answer the question that actually matters for deployment decisions: can this person handle real project conditions? That’s the gap that AI-powered assessments are now purpose-built to close.

What Traditional Assessments Were Actually Measuring

To understand why the gap exists, it helps to understand what traditional assessments were designed for – and what they were never designed to do.

For decades, the enterprise skilling model followed a predictable arc. A professional completed a structured learning path, took a test against that content, received a score, and earned a certification. The process was clean, scalable, and easy to report upward. It was also, fundamentally, a measure of recall – not capability.

The environments were controlled. Tools were restricted. Complex, multi-step workflows were broken into isolated fragments. Integration points – the places where real project complexity actually lives – were removed entirely to make scoring tractable. And at the end, the output was a single number that represented performance in those controlled conditions.

Real work doesn’t look like that.

In real project environments, a developer doesn’t answer a question about database configuration – they configure a live database, discover that a dependency hasn’t been set up correctly, make a judgment call about how to proceed, hit a failure, debug it, and adapt. The skill that matters isn’t knowledge of the concept. It’s the ability to execute, recover, and think when the environment doesn’t cooperate.

Traditional skill validation assessments weren’t capturing any of that. They were measuring the map, not the terrain. What enterprises actually needed, and what AI-based skill evaluation and AI-Powered Skill Assessment now deliver, is a way to measure the journey, not just the destination.

The Specific Moment Enterprises Started Paying Attention

The shift in how enterprises think about readiness didn’t happen all at once. It accumulated across a series of project post-mortems and honest conversations that gradually forced the question into the open.

“We were passing people who weren’t ready” is a phrase that shows up, in different forms, in nearly every enterprise that has seriously examined its skilling outcomes. Sometimes it came through client feedback. Sometimes it came from delivery managers who’d quietly started factoring in a “ramp-up buffer” for people who were officially certified. Sometimes it came from L&D leaders who looked at completion data and project performance data side by side and realised the correlation just wasn’t there.

By 2025, Gartner was reporting that 64% of HR leaders identified closing the skill gap as their top priority – but also acknowledged that most existing skill assessment platforms couldn’t reliably differentiate between someone who had learned a skill and someone who could apply it under pressure. That’s a significant problem when the decisions those assessments are informing involve project staffing, client delivery, and revenue.

The question enterprises started asking wasn’t “how do we make assessments harder?” It was more fundamental than that: how do we make assessments real? The answer that has emerged is AI-driven skill evaluation: moving from what people can recall to what they can actually do.

Enter Nuvepro’s EASE – Engine for AI-Based Skill Validation, a Fundamentally Different Approach

EASE – Engine for AI-Based Skill Validation is the intelligence layer that sits at the core of Nuvepro’s skill validation assessments. It doesn’t tweak the traditional model. It replaces that model’s logic entirely.

The starting point is the environment. Rather than placing learners in restricted sandboxes with simplified configurations, EASE – Engine for AI-Based Skill Validation deploys them into environments that behave like real project scenarios – live configurations, real integration points, end-to-end workflows that don’t skip the messy parts. These are AI-powered assessments in the truest sense: learners aren’t choosing answers. They’re doing actual work.

But the more significant shift is in how evaluation happens.

Traditional automated scoring looks at outputs: did the final answer match the expected answer? Nuvepro’s EASE (Engine for AI-Based Skill Validation) looks at the entire execution process – the sequence of actions, the decisions made mid-task, how the learner handled failure, whether they debugged intelligently or restarted from scratch, whether they made trade-offs that reflect genuine understanding or just got lucky with a path that happened to work.
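To make the contrast concrete, here is a toy sketch of output-only scoring next to process-level signals. It is not EASE’s evaluation model, which the article does not describe in detail; the `Step` trace format, the action names, and the three signals counted are all assumptions made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Step:
    """One recorded learner action (hypothetical trace format)."""
    action: str
    succeeded: bool


def output_only_score(final_output_correct: bool) -> float:
    """Traditional scoring: only the final answer counts."""
    return 1.0 if final_output_correct else 0.0


def process_signals(steps: list[Step]) -> dict[str, int]:
    """Count simple process-level signals from a trace (illustrative only)."""
    failures = sum(1 for s in steps if not s.succeeded)
    restarts = sum(1 for s in steps if s.action == "restart_environment")
    # A failure counts as recovered if the same action succeeds later on.
    recoveries = sum(
        1
        for i, s in enumerate(steps)
        if not s.succeeded
        and any(t.action == s.action and t.succeeded for t in steps[i + 1:])
    )
    return {"failures": failures, "recoveries": recoveries, "restarts": restarts}


# Two hypothetical learners who both end with a working database.
debugger = [
    Step("configure_db", False),
    Step("inspect_logs", True),
    Step("configure_db", True),
]
restarter = [
    Step("configure_db", False),
    Step("restart_environment", True),
    Step("configure_db", True),
]

print(output_only_score(True), process_signals(debugger))
print(output_only_score(True), process_signals(restarter))
```

Both learners get the same output-only score, yet the traces differ: one inspected the logs before retrying, the other restarted from scratch. A real evaluator would weigh far richer context than these counts, but the sketch shows why the final answer alone cannot separate the two.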

In 2026, with AI evaluation models now capable of contextual reasoning at scale, this kind of continuous process analysis is no longer just theoretically possible – it’s operationally viable across large learner populations. EASE – Engine for AI-Based Skill Validation applies that capability to a problem the enterprise has been sitting with for years.

Why Role-Based Validation Changes the Equation

One of the quieter failures of traditional technical skill assessments is the assumption that everyone can be measured the same way.

A cloud infrastructure engineer and a data pipeline engineer might both work in the same technology stack. But their decisions, priorities, and definitions of “done” are entirely different. Measuring them with the same instrument produces data that looks consistent but isn’t meaningful. It tells you how both performed on a generic test, not how either would perform in their actual role.

Nuvepro’s project readiness solutions include role-based assessment frameworks built to mirror the specific responsibilities of each function. A full-stack developer is evaluated on end-to-end execution across the stack. A cloud engineer is assessed on infrastructure judgment, configuration decisions, and failure handling. A data engineer is validated on pipeline design, integration quality, and data management thinking.

This matters not just for accuracy but for trust. When a delivery manager looks at a role-based readiness report and sees that someone has been validated specifically for the job they’re about to do – not for a generalised version of their domain – the confidence level is different. The decision to deploy becomes grounded in something real. That’s what AI-powered assessments calibrated to specific roles make possible.

The AI Infrastructure Behind the Validation

What makes EASE – Engine for AI-Based Skill Validation genuinely different from earlier attempts at automated assessment is the sophistication of its AI-based skill evaluation model and the consistency it brings at scale.

Manual evaluation has always struggled with two problems: subjectivity and capacity. A skilled human reviewer can assess a complex submission with nuance, but they also bring individual biases, vary in their standards from submission to submission, and can’t maintain consistency across thousands of assessments in parallel. Earlier automated systems solved the capacity problem but introduced a distortion of their own: rigid rubrics that penalised valid alternative approaches.

The AI-driven skill evaluation model in EASE – Engine for AI-Based Skill Validation addresses both. It evaluates submissions in context, which means it can recognise when two different approaches to the same problem both reflect genuine competence – and when one reflects understanding while the other reflects guesswork that happened to work. It normalises outcomes across cohorts, geographies, and assessment conditions, so that a readiness rating in one team means exactly the same thing in another.

In a 2026 landscape where enterprise teams are distributed across multiple time zones and skilling programs run in parallel across dozens of cohorts, that consistency isn’t a nice-to-have. It’s what makes the data actually comparable.

From a Number to a Decision

One of the practical innovations in how EASE – Engine for AI-Based Skill Validation presents results is the translation of raw performance data into readiness signals that are actually useful for the humans who have to act on them.

Every submission is evaluated on a 0-10 performance scale, normalised into a percentage, and then mapped to a readiness grade. But these grades aren’t cosmetic categories – they’re calibrated to answer a specific operational question: what can this person be trusted to do, right now, without additional support?

An Outstanding or Excellent rating means genuine production readiness – the kind of performance that holds up when a client environment behaves unexpectedly. A Good rating signals strong execution with minimal guidance needed. In the middle of the scale, grades flag where structured support is still required before deployment makes sense. At the lower end, results indicate gaps that need targeted intervention before real work begins.
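The score-to-grade pipeline described above can be sketched in a few lines. This is only an illustration: the article does not publish the actual cut-offs, so every threshold in `GRADE_BANDS` is an assumption, and the two labels below “Good” are placeholder names for the unnamed middle and lower bands.

```python
# Hypothetical bands: (minimum percentage, grade). The real thresholds are
# not given in the article, and the last two grade names are placeholders.
GRADE_BANDS = [
    (90.0, "Outstanding"),
    (80.0, "Excellent"),
    (70.0, "Good"),
    (50.0, "Needs structured support"),
    (0.0, "Needs targeted intervention"),
]


def to_percentage(score: float, scale_max: float = 10.0) -> float:
    """Normalise a raw 0-10 performance score into a percentage."""
    if not 0.0 <= score <= scale_max:
        raise ValueError(f"score must be between 0 and {scale_max}")
    return score * 100.0 / scale_max


def readiness_grade(score: float) -> str:
    """Map a raw score to the first band whose floor it meets."""
    pct = to_percentage(score)
    for floor, grade in GRADE_BANDS:
        if pct >= floor:
            return grade
    raise AssertionError("unreachable: the lowest band starts at 0")


for raw in (9.5, 8.4, 7.2, 5.5, 2.0):
    print(raw, to_percentage(raw), readiness_grade(raw))
```

Keeping the bands in a single ordered table means the calibration can change without touching the mapping logic, which matters if grades are meant to carry the same meaning across cohorts.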

For L&D teams, this shifts the output of a skill assessment platform from data storage to operational intelligence. AI-powered talent evaluation – grounded in real execution rather than test scores – changes the conversation in the staffing meeting from “this person completed the certification” to “this person has been validated at a Good level for this specific role – here’s what that means for the project.”

That’s the shift enterprises have been waiting for. Not a better test. An actual answer.

What Changes in Practice and What the Data Shows

Enterprises that have moved to project readiness solutions powered by EASE – Engine for AI-Based Skill Validation report changes across three areas that matter most to the business.

First, onboarding velocity improves. When you can identify from day one exactly who is ready to contribute independently versus who needs targeted support in specific areas, ramp-up timelines compress. You stop burning senior engineer time on problems that properly validated talent would handle without escalation.

Second, deployment confidence rises. The hesitation that delivery managers carry into project kickoffs – that quiet “I hope this team is actually ready” – is replaced by something more concrete. Skills validated through real performance in conditions that resemble the actual job produce a different quality of confidence than skills inferred from course completions.

Third, the L&D function earns a different kind of seat in the business conversation. When AI-powered talent evaluation data is tied to actual readiness for deployment rather than learning activity metrics, learning leaders can speak directly to delivery outcomes. That changes how the function is perceived and resourced.

Research from Deloitte’s 2025 Human Capital Trends report supports this shift: organisations that invest in performance-based skill validation rather than completion-based credentialing report 31% higher confidence in workforce deployment decisions and measurably better outcomes on first-year project performance metrics.

The Quiet Revolution in How Readiness Gets Defined

There’s something worth sitting with in how this shift changes the human experience of skill development, not just the enterprise experience.

Under the old model, a professional could complete training, pass an assessment, and still feel a background hum of uncertainty about whether they were actually ready. That uncertainty is corrosive. It’s the thing that makes people hesitate when a production system goes down or defer to a senior colleague on a decision they should be equipped to make themselves.

AI-Powered Skill Assessment built on Nuvepro’s EASE (Engine for AI-Based Skill Validation) changes that dynamic. When you’ve been evaluated not on what you remembered in a test, but on what you actually did in conditions that looked like real work, the confidence you carry into a project is different. It’s earned confidence, not assumed confidence. And that difference shows up in how people perform when the stakes are real.

The Bigger Picture

In 2026, the enterprise skilling conversation has moved past the question of whether technology can improve assessment. It can, and EASE – Engine for AI-Based Skill Validation is evidence of that. Organisations that have embraced AI-powered talent evaluation are no longer asking whether their people are ready – they know. The more important question now is what it means for organisations that make the shift early and what it costs organisations that don’t.

The companies building skill validation infrastructure now – ones that can say with evidence who is ready, for what role, at what level of complexity – are building a capability that compounds over time. Every deployment decision gets better informed. Every project kick-off starts from a more honest foundation. Every gap that gets identified early is a risk that gets addressed before it becomes a problem.

That’s not just better L&D. That’s a competitive advantage that is genuinely hard to replicate without investing in the underlying capability to validate skills the right way.

EASE – Engine for AI-Based Skill Validation is Nuvepro’s answer to the question enterprises have been quietly asking for years. Built on a foundation of AI-based skill evaluation, it doesn’t ask “how do we track more learning?” It asks the only question that matters: “how do we actually know who is ready?”

The answer, it turns out, isn’t a better scorecard. It’s a fundamentally different way of asking the question.

Learn more about Nuvepro’s EASE – Engine for AI-Based Skill Validation at https://nuvepro.ai/