Before a project is staffed, one critical question often gets ignored: Do we have the right, validated skills in place?

Not “are these people trained?” That is answered by the LMS. Not “did they pass the assessment?” That is answered by the certification report. The question that is almost never asked is the only one that actually determines what happens when the engagement goes live:

Can these people perform under real conditions: when the environment does not cooperate, when the brief is incomplete, when the first approach fails, and when the client is watching?

That question has no answer in the data most enterprises are currently tracking. And that absence, that blind spot at the centre of every staffing decision, is what “certified but not ready” actually looks like before it becomes a problem on the ground.

In 2026, the enterprises that have closed this blind spot are not doing so through better training programmes or more rigorous tests. They are doing it through a fundamental shift in what they measure: moving from credentials and completion records to validated, evidence-grounded readiness. The engine making that shift possible is Nuvepro’s EASE (Engine for AI-Based Skill Validation).

What follows is not a case for changing how your organisation trains its people. It is a case for changing what you know about them once training is done.

Knowing that training happened is the beginning of a readiness story. It is not the end of one.

The Industry Signal That Keeps Being Ignored

A 2025 global workforce survey of over 56,000 workers across 50 countries found that 53% of employees said the skills required in their role had changed significantly over the past three years. Among that group, the majority had completed training to address the change. But only 4 in 10 said they felt fully equipped to apply new skills under actual work conditions.

Read that carefully. Training happened. Certification happened. And only four out of ten people who went through both felt ready to use what they’d learned when real work demanded it. For the remaining six, the training produced knowledge. It did not produce readiness.

6 in 10

Employees who completed skills training did not feel equipped to apply it under real work conditions. – Global Workforce Survey, 2025

This is not a finding about motivation or effort. The people in that survey were not disengaged. They completed the training. They sat the assessments. The gap is between what the assessment confirmed (that they had encountered and recalled the content) and what real work requires: the ability to operate with the content when the environment is live, the stakes are real, and there is no clean correct answer waiting at the end of a multiple-choice question.

A separate 2025 HR leaders survey adds a structural dimension: 58% of HR leaders said their organisations’ technical skill assessment tools could not reliably distinguish between theoretical familiarity with a skill and genuine applied competence. More than half of the people responsible for workforce readiness were operating tools that could not answer the readiness question they were being asked to solve.

These signals have been available for years. The organisations that took them seriously, and rebuilt their readiness measurement accordingly, are the ones quietly building the competitive distance.

What Counting the Wrong Thing Actually Costs

The financial case for fixing skill validation does not require a new model. It requires applying existing delivery data to a question it is rarely asked to answer.

When a technology engagement overruns its timeline, the post-mortem typically names complexity, scope change, or resourcing constraints. These are real factors. They are also, frequently, the story that an undetected skills gap tells about itself. The actual cause, that the team was deployed at a readiness level below what the engagement required, rarely surfaces in the formal record because the skills data said the team was ready. And the skills data is trusted even when the delivery outcome contradicts it.

A 2025 global employment analysis estimated that deploying talent without genuine role-specific validation contributes to an annual productivity loss of approximately $1.3 trillion across professional services, technology, and financial sectors worldwide. This is not the cost of bad training. It is the cost of the gap between training completion and actual deployment readiness going unmeasured and therefore unmanaged.

$1.3T

Estimated annual productivity loss from deploying talent without genuine role-specific validation. – Global Employment Analysis, 2025

At an organisational level, this shows up in ways that are rarely connected to their root cause: client accounts that renew but do not grow, delivery margins that compress on complex engagements, senior resources that are pulled into work that should have been handled at a more junior level, and talent pipelines that produce certified people who require more hand-holding than their credentials implied.

Changing what the organisation counts, from completions to validated readiness, is the first step toward recovering that cost. It is also the step that AI-powered skill assessment makes operationally possible.

How the Shift from Credential Counting to Readiness Validation Actually Happens

The shift from credential counting to readiness validation is not theoretical. It is operational, and it follows a pattern that repeats across every organisation that makes it honestly.

It usually starts with a single uncomfortable question from a delivery leader or a client: can you tell us, specifically, that the people being deployed are ready for this engagement, not just that they are certified? Most talent functions, when asked that question directly, find they cannot answer it with the precision required. They can confirm training completion. They can share assessment scores. What they cannot provide is evidence of how those people perform when the environment is live and the stakes are real.

The organisations that have resolved this tension did not do it by running more training or raising certification thresholds. They changed what deployment readiness meant, moving the primary readiness gate from completion-based signals to performance-based ones. The distinction sounds subtle. In practice, it is the difference between knowing that someone studied the map and knowing they can navigate the terrain.

What drives this shift, increasingly, is not just internal talent strategy. It is client expectation. Procurement leaders and engagement sponsors are asking for capability evidence, not just credentials, before engagements begin. The market is applying pressure from the outside that internal measurement systems are struggling to meet from the inside.

The buyer-side signal: A 2025 survey of enterprise technology buyers found that 61% of procurement leaders said they would pay a premium for a services partner that could provide verified capability evidence for their delivery team, not just certification records. The demand for proof is coming from clients, not just from internal talent strategy.

The organisations building the infrastructure to meet that demand, through AI-powered talent evaluation that goes beyond completion records, are not running experiments. They are responding to a market that has already moved.

The Validation Gap - What Traditional Assessments Miss vs. What EASE Sees

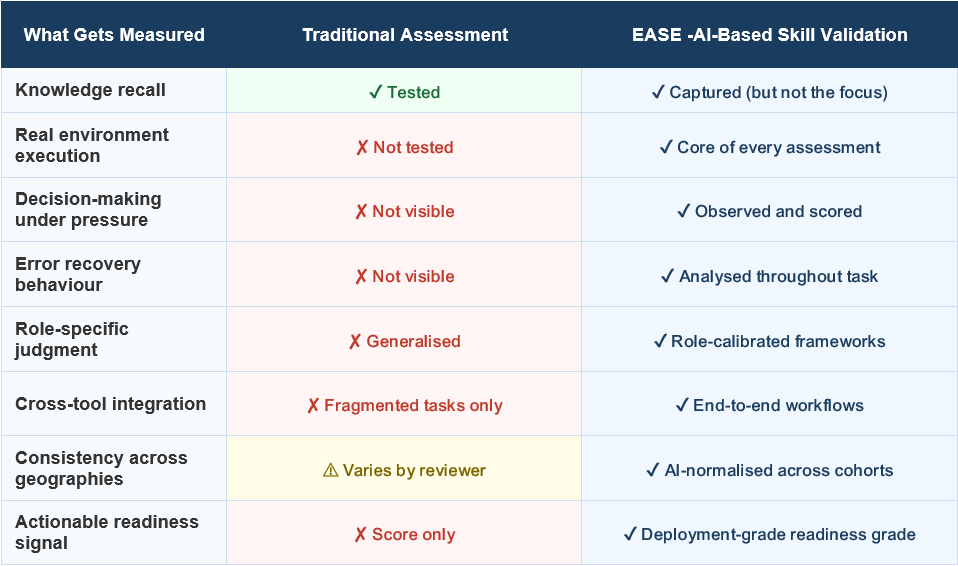

The table below maps what each approach actually measures and where the gap in deployment decisions lives.

The gap in the table above is not a gap in training quality. It is a gap in measurement depth. Traditional assessments were built to confirm exposure. AI-based skill evaluation built on EASE (Engine for AI-Based Skill Validation) is built to confirm readiness. Those are different instruments answering different questions. The first question – was this person exposed to the content? – has never been the one that determines delivery outcomes. The second one does.

Why the Fix Is a Measurement Decision, Not a Training Decision

When organisations discover a readiness gap, the instinct is to add more training. More modules. More mandatory certifications. A higher pass threshold on the existing assessment.

This response is understandable and consistently insufficient.

The problem is not that people need more exposure to content. In most organisations, the training investment is already substantial. The problem is that after all of that exposure, the measurement system being used to confirm readiness cannot see into the part of capability that actually matters: what happens when someone takes that content and applies it in a live, ambiguous, high-stakes environment.

A 2025 study of enterprise talent functions found that organisations that invested in upgrading their skill validation methodology, moving from static assessment to performance-based evaluation, saw a 40% reduction in post-deployment skill gap incidents within two quarters, without any change to their underlying training content. The training was already doing its job. The measurement was not. Fixing the measurement fixed the outcome.

40%

Reduction in post-deployment skill gap incidents after upgrading to performance-based validation, with no change to training content required. – Enterprise Talent Function Study, 2025

This is the nature of the measurement decision. It does not compete with training investment. It completes it. AI-driven skill evaluation grounded in real-work performance data is the layer that turns training into evidence of readiness: the layer that was always missing and is now available.

What EASE (Engine for AI-Based Skill Validation) Measures That Nothing Else Can

The architecture of EASE (Engine for AI-Based Skill Validation) starts from a different premise than traditional assessment. Rather than asking what a learner knows, it asks what a learner does in a live environment, with real configurations, real integration points, and real consequences when something goes wrong.

Learners working through Nuvepro’s AI-powered assessments are not choosing from pre-set answers. They are executing. The environment they work in behaves like a real project scenario, with the ambiguity, the incomplete information, and the occasional system behaviour that documentation did not anticipate. The task is not to demonstrate knowledge. It is to deliver an outcome.

What EASE observes and analyses throughout that execution is the evidence that no final score can contain: the sequence of decisions made when the path was unclear, the approach to failure when something broke mid-task, the quality of judgment when two valid options led to different trade-offs, and whether the professional adapted intelligently or defaulted to familiar patterns that did not fit the problem. This is AI-powered skill assessment operating at the depth that deployment decisions require.

The role-based framework ensures that what is being evaluated is relevant to the actual function. A cloud engineer’s readiness is assessed against the decisions, tools, and responsibilities of a cloud engineer, not a generalised technology competency profile. A data architect is evaluated on the judgment calls that data architecture requires. The readiness signal that comes out of the AI-powered assessment is specific to the role, which means it is specific enough to inform the staffing decision.

The EASE Readiness Spectrum - From Score to Deployment Decision

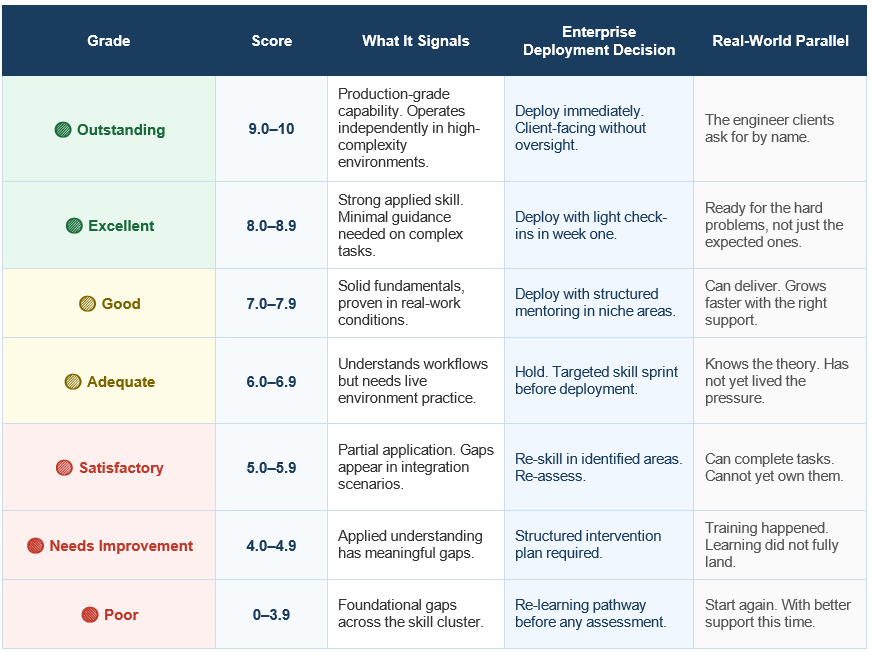

Every EASE AI driven skill evaluation assessment produces a readiness grade, not just a score. The table below shows what each grade means for the enterprise and the human behind it.

The readiness spectrum above is what changes the nature of the deployment conversation. Instead of a score that requires interpretation, the output of every EASE assessment is a specific, calibrated signal that answers the operational question directly: what can this person be trusted to do, right now, without a safety net? That specificity is what makes AI-powered assessments actionable rather than merely reportable.

The Question Every Talent Leader Should Be Able to Answer Any Day

There is a simple test for whether an organisation’s skill validation infrastructure is doing its job.

When a delivery lead needs to know whether a particular team member is ready for a particular client engagement, can the talent function answer that question specifically, by role, by skill cluster, and by readiness grade, in under thirty minutes?

In most organisations, the answer is no. The available data can confirm that the person completed training and passed an assessment. It cannot confirm whether they are ready for the specific demands of the specific engagement. The delivery lead either trusts the credential and moves forward or spends days gathering context through informal channels to make a decision that the system should be able to answer directly.

The organisations that have built AI-powered talent evaluation infrastructure on top of performance-based validation can answer that question. They know, by role and readiness grade, who is deployment-ready right now. Their staffing decisions are made in hours rather than days. Their confidence is grounded in evidence rather than credential inference. And their delivery outcomes reflect the difference.
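To make that question concrete, here is a minimal sketch of what such a lookup could look like once readiness is stored per person, role, and skill cluster. It is illustrative only and does not describe how EASE stores or exposes its data: the `Grade` labels, the `ReadinessRecord` fields, the `deployment_ready` function, and the sample names are all hypothetical.

```python
from dataclasses import dataclass
from enum import IntEnum


class Grade(IntEnum):
    # Hypothetical grade labels, used only for this sketch.
    NOT_READY = 0
    SUPERVISED = 1
    INDEPENDENT = 2


@dataclass(frozen=True)
class ReadinessRecord:
    # One validated result for one person in one skill cluster (assumed shape).
    person: str
    role: str
    skill_cluster: str
    grade: Grade


def deployment_ready(records, role, skill_clusters, minimum=Grade.INDEPENDENT):
    """Return people in `role` graded at `minimum` or above in every required cluster."""
    required = set(skill_clusters)
    validated = {}
    for r in records:
        if r.role == role and r.skill_cluster in required and r.grade >= minimum:
            validated.setdefault(r.person, set()).add(r.skill_cluster)
    # Keep only people who cleared the bar in all required clusters.
    return sorted(p for p, clusters in validated.items() if clusters == required)


# Made-up sample data for illustration.
records = [
    ReadinessRecord("Asha", "cloud engineer", "networking", Grade.INDEPENDENT),
    ReadinessRecord("Asha", "cloud engineer", "iam", Grade.INDEPENDENT),
    ReadinessRecord("Ben", "cloud engineer", "networking", Grade.INDEPENDENT),
    ReadinessRecord("Ben", "cloud engineer", "iam", Grade.SUPERVISED),
    ReadinessRecord("Chen", "data architect", "modelling", Grade.INDEPENDENT),
]

ready = deployment_ready(records, "cloud engineer", ["networking", "iam"])
print(ready)  # ['Asha']
```

The point of the sketch is the shape of the question, not the implementation: a completion record could not separate the two cloud engineers here, while a per-cluster readiness grade can.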

The value of knowing who is ready is not just in the decision that gets made. It is in the decisions that do not have to be unmade three weeks into an engagement when the gap surfaces on its own.

What Your Enterprise Is Still Counting and What to Count Instead

The completion rate is not going away. It remains a useful operational metric for tracking training activity. But it is not a readiness metric. It has never been a readiness metric. And in an enterprise environment where the complexity of delivery has outpaced the sensitivity of traditional assessment, treating it as one has a cost that is now large enough to be strategic rather than merely operational.

The organisations building a real competitive advantage in talent readiness are not the ones running more training. They are the ones who have changed what they count after training ends, shifting from “who completed” to “who is validated, at what level, for which role, right now.”

EASE (Engine for AI-Based Skill Validation) produces that count. Through AI-based skill evaluation that analyses real execution in real environments, it turns the readiness question from a gap in the data into a specific, trustworthy, role-calibrated answer.

The question is not whether your competitors have figured this out. Some of them have. The question is how much longer it will take to close the distance between what they know about their workforce and what your organisation knows about yours.

The count that determines your delivery outcomes is not on your current dashboard. It is available. It is specific. And it is the one worth building toward.

Discover what EASE (Engine for AI-Based Skill Validation) reveals about your workforce’s true readiness at www.nuvepro.ai