It’s a conversation that keeps coming up: in leadership meetings, at industry events, and even in the quick chats after AI sessions.

“So… are we supposed to be worried about our jobs? Or excited?”

It’s a fair question.

And the honest answer, the one that rarely gets said out loud, is: both. At the same time. For different reasons.

Because here is what is actually happening, beneath all the noise around AI, underneath all the product launches and strategy decks and breathless predictions about what AI will or won’t replace:

AI is not on a straight path toward replacing human work. And it is not simply a tool that makes human work faster. It is doing something far more layered than either of those narratives suggests.

It is quietly reshaping what work actually is: pulling certain tasks away entirely, pushing others deeper into areas that require human judgment, and creating a new kind of interdependence between what machines do well and what people do better.

This is exactly why many organisations are beginning to invest more deliberately in enterprise AI training programs, not just to introduce tools, but to help teams understand how work itself is being restructured and where they fit into that shift.

Because the real change is not about replacing roles. It is about redefining them.

What’s Actually Changing in the Way We Work

Most roles aren't going away. They're being hollowed out from the inside, and what's filling that space is far more valuable than what's leaving.

Some responsibilities are becoming fully automated. Others are expanding, requiring more context, creativity, and decision-making than before. And in between, a new layer of work is emerging, where humans and AI systems collaborate, hand off tasks, and build on each other’s strengths.

For business professionals working with AI, this creates a different kind of expectation. It is no longer enough to simply “use” AI tools. The real value comes from knowing when to rely on them, where to step in, and how to guide the outcomes they produce.

What we are seeing is not a linear transition. It is a redistribution of work.

And that redistribution is exactly what organisations are now trying to understand more clearly.

The research puts it this way: automation and augmentation are not opposites. They feed each other. When AI takes over the tasks it can handle – the structured, repeatable, codifiable ones – it creates space for humans to do more of the work that machines genuinely cannot: the judgment calls, the relationship decisions, the moments that require ethics and context and the kind of wisdom that does not compress into a prompt.

Which means the question is not “will AI replace us?”

The question is: does your organisation know, specifically and deliberately, which work it is handing to AI, and which work it is protecting for the people in the room?

Most don’t. Not yet. And that gap between having the tools and knowing what to do with them is exactly where the real work of AI transformation lives.

AI does not replace human intelligence. It changes what human intelligence is needed for. That shift is the opportunity, if you’re clear enough about what you’re doing with it.

Why Most Enterprise AI Training Programs Stall at the Same Point

There is a pattern that repeats across almost every large organisation that has invested seriously in AI adoption.

Phase one goes well. Leadership aligns. AI tools are selected. A cohort of enthusiastic early adopters demonstrates what is possible. The business case looks strong. Phase two is where it stalls. The rollout hits the broader workforce, and something quiet happens: people use the tools for the tasks they were already comfortable with and route around them for everything else. The tool becomes one more tab open in the browser rather than a fundamental change to how the day works.

The reason is almost never resistance. People are not refusing AI. They are uncertain about it in a specific, practical way: they do not know which parts of their job they are still supposed to own and which parts the technology is now responsible for. Without that clarity, the safest thing to do is keep doing what they know. And so, they do.

12–18%

Average AI tool adoption rate in enterprise organisations, despite full licensing. – Global AI Implementation Study, 2025

Most enterprise AI training programs address the wrong layer of this problem. They teach people how to use the tools. They do not teach people how the work has changed. There is a significant difference between knowing how to write a prompt and knowing which decisions in your role you are now expected to make with AI input and which ones you are expected to make without it.

The framework that closes this gap is not a training curriculum. It is a classification. Before any training is designed, the work itself has to be mapped – every task in every role sorted into one of three categories: automate, augment, or human-only. That map is what gives training its direction. Without it, even the best generative AI training for employees produces people who know the tool but do not know where to use it.

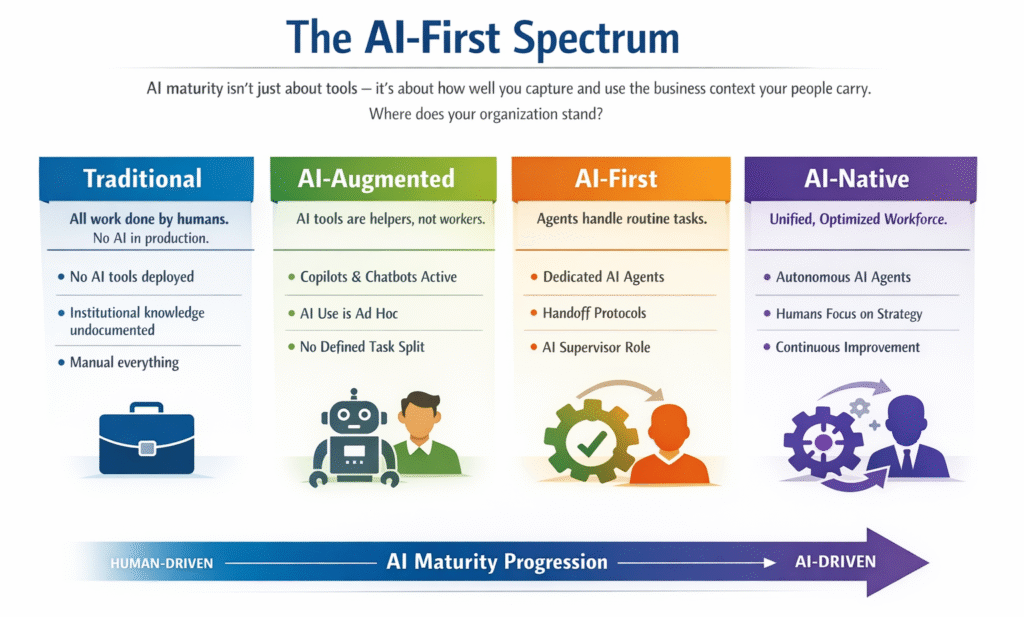

The AI-First Spectrum: Four Stages, and the Honest Question of Where You Actually Are

Before deciding where to go, it is worth being honest about where you are. AI maturity exists on a spectrum, and most organisations overestimate their position on it.

At the start of the spectrum is Traditional – all work done by humans, no AI in production. Knowledge lives in people’s heads and is rarely documented. This sounds like a distant starting point, but for many departments within large organisations, it is still the accurate description.

The next stage is AI-Augmented – tools are live, Copilot licences are active, chatbots are deployed. But humans are still doing the same work in essentially the same way. AI is a helper that sits alongside existing workflows rather than a structural change to them. This is where the 12–18% adoption problem lives. The tools are there. The work division is not.

The third stage is AI-First – and this is where genuine transformation begins. Work is divided. Agents own specific, defined tasks. Humans supervise and own the work that requires their expertise. Handoff protocols exist between what the agent produces and what the human decides. New roles (AI supervisors, agent coordinators) start to appear in the organisation chart.

The fourth stage is AI-Native – agents and humans operate as a unified workforce. The division of work is continuously refined by real performance data. Humans focus on strategy, exceptions, and the high-stakes decisions that require human accountability. The organisation gets faster every quarter not because it adds more tools but because the operating model optimises itself.

Most organisations that believe they are AI-First are AI-Augmented. The test is simple: can you name which specific tasks agents own, who supervises the handoff, and how you measure whether it is working? If not, the tools are live, but the transformation is not.
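The test above can be written down as a short checklist. What follows is a minimal sketch, not a formal maturity model: the class, field, and function names are all illustrative assumptions, and it assumes the tools are already live, so the only open question is whether the organisation is AI-Augmented or AI-First.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class OperatingModelEvidence:
    """Answers a team can give today. All field names are illustrative."""
    agent_owned_tasks: List[str] = field(default_factory=list)
    handoff_supervisor: Optional[str] = None
    success_metric: Optional[str] = None


def missing_answers(evidence: OperatingModelEvidence) -> List[str]:
    """Return the test questions the team cannot yet answer."""
    gaps = []
    if not evidence.agent_owned_tasks:
        gaps.append("which tasks agents own")
    if not evidence.handoff_supervisor:
        gaps.append("who supervises the handoff")
    if not evidence.success_metric:
        gaps.append("how success is measured")
    return gaps


def likely_stage(evidence: OperatingModelEvidence) -> str:
    """Assumes tools are already live: any unanswered question means AI-Augmented."""
    return "AI-First" if not missing_answers(evidence) else "AI-Augmented"


# Hypothetical team: it can name one agent-owned task, but nothing else yet.
team = OperatingModelEvidence(agent_owned_tasks=["resume screening"])
print(likely_stage(team), missing_answers(team))
```

A team that can name an agent-owned task but not the supervisor or the measure still lands in AI-Augmented, which is the point of the test.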

Moving from AI-Augmented to AI-First requires something that cannot be purchased in a software licence: the capture of business context. The institutional knowledge of how work actually gets done – the judgment calls, the exceptions, the things that are never in the documentation – has to be surfaced, mapped, and used to design the new operating model. That is the work that determines whether AI transformation delivers or stalls.

AI for Business Professionals: What the New Division of Work Actually Looks Like

The most useful thing any leadership team can do right now is get specific.

Abstract conversations about AI transformation rarely produce traction. What produces traction is a task-level map of a single job role in a single department – every task that person does in a week, sorted into one of three piles: tasks that agents can own end-to-end, tasks that agents can assist with while the human makes the final call, and tasks that require human judgment that no agent should be trusted with.

When that map exists, something shifts. The work becomes concrete. The questions get answerable.

For business professionals working with AI – the finance managers, HR leaders, legal associates, and customer success managers who are not engineers but whose roles are being restructured by AI – this level of specificity is what makes training meaningful. HR, legal, marketing, software development, customer success: every department has its own version of this map. And in every department, the map looks different. Which is exactly why one-size-fits-all enterprise AI training programs rarely move the needle.

The question is not whether your workforce is ready for AI. It is whether you have given them enough clarity about the new work to be ready for anything.

AI Training for Non-Technical Employees: Why Hands-On Labs Change What Training Can Do

Once the task map exists, training has a target. And the kind of training that actually builds the readiness the new operating model requires is not a video course or a knowledge check. It is practice in conditions that resemble real work.

Consider what the new operating model actually asks of a non-technical employee. A talent specialist whose resume screening task is now handled by an agent does not need to know how the model works. They need to know how to validate the output: how to catch the cases where the agent missed something, how to handle the exception that falls outside the agent’s guardrails, and how to make the final call with confidence when the recommendation is borderline.

That capability cannot be built by watching a demonstration. It is built by doing, by working through simulated scenarios where the agent produces an output and the human has to evaluate it, challenge it, or act on it. This is what AI training for non-technical employees needs to look like if it is going to produce people who can actually operate the new model, not just describe it.

An AI sandbox training platform – a live environment where employees can work through realistic agent interactions, practise handoff decisions, and build judgment about when to trust AI output and when to override it – is the infrastructure that makes this kind of training possible. It is the difference between training people on AI and training people with AI.

What hands-on looks like in practice: In Nuvepro’s GenAI Sandbox environments, employees work through real task simulations – validating agent outputs, managing handoffs, handling exceptions. The competency being built is not AI awareness. It is AI supervision: the specific judgment that the new operating model depends on.

AI training with hands-on labs for enterprises changes the outcome of skilling investment in a way that passive training cannot. People leave knowing what to do differently, not just knowing more about AI. That is the distinction that shows up in adoption rates, in task execution quality, and in the confidence with which people operate a model that is genuinely new to them.

AI-Powered Skill Assessment: How You Know If the New Operating Model Is Actually Working

Training without validation is hope with a timeline.

Once the task map is defined and the training is designed, the question that determines whether any of it translates into real operating model change is the same question that determines whether any skill development programme works: can the people who went through it actually do what the new model requires?

That question cannot be answered by a completion certificate. It cannot be answered by an assessment that checks whether someone remembers the module content. It can only be answered by observing someone do the work, specifically the new work: validating an agent output, executing a handoff correctly, making a sound judgment call in an augment scenario where the AI has produced a recommendation that needs human review.

This is what AI-powered skill assessment through EASE (Engine for AI-Based Skill Validation) means in this context. Not a test of AI literacy. A validation of the specific competencies the new operating model requires: AI output validation, exception handling, handoff protocol design, strategic oversight, and the contextual judgment that determines whether a human is genuinely supervising an agent or just rubber-stamping its outputs.

Nuvepro’s readiness scorecard assesses workforce readiness across five dimensions: AI literacy, tool proficiency, workflow integration, critical thinking, and adaptability. Each of these maps directly to a capability the new operating model depends on. And each of them, when measured through AI-driven skill evaluation in realistic task scenarios rather than standardised tests, produces a readiness signal that is specific enough to act on.

67%

Of enterprise AI implementations that underperformed cited workforce readiness as the primary gap, not the technology. – Enterprise AI Implementation Analysis, 2025

The readiness data shapes everything downstream: which roles are ready for deployment now, which need a targeted sprint before going live, and where the gaps are specific enough to address in weeks rather than months. AI-based skill evaluation grounded in real task performance is what turns a transformation roadmap from a document into a decision.

EASE – Engine for AI-Based Skill Validation: The Infrastructure That Makes the Transition Real

There is a three-phase rhythm to how the transition from AI-Augmented to AI-First actually works in practice. And each phase depends on a form of intelligence that passive observation cannot provide.

The first phase is mapping. Not at the organisational level, not at the departmental level, but at the task level for one specific job role in one specific department. Every task that role performs is mapped and classified: automate, augment, or human-only. The hours currently spent on each task are documented. The hours that will be freed when agents take over the automatable ones are projected. The competencies the human will need to supervise the augmented ones are specified. This is the picture that makes every subsequent decision concrete rather than aspirational.
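A task map of this kind is, at its simplest, a small table with a category and an hours figure per task. The sketch below is one way to hold it, under stated assumptions: the names `Category`, `Task`, and `summarise_role` are hypothetical, the tasks and hours are made-up illustrative figures rather than data from any audit, and the hours freed are taken to equal the hours currently spent on automatable tasks, which ignores any supervision overhead.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List, Tuple


class Category(Enum):
    AUTOMATE = "automate"
    AUGMENT = "augment"
    HUMAN_ONLY = "human-only"


@dataclass(frozen=True)
class Task:
    name: str
    category: Category
    weekly_hours: float
    # Competencies the human needs in order to supervise an augmented task.
    supervision_skills: Tuple[str, ...] = ()


def summarise_role(tasks: List[Task]) -> Dict[str, object]:
    """Total hours per category, projected hours freed, and skills to validate."""
    hours = {category: 0.0 for category in Category}
    for task in tasks:
        hours[task.category] += task.weekly_hours
    skills = sorted({
        skill
        for task in tasks
        if task.category is Category.AUGMENT
        for skill in task.supervision_skills
    })
    return {
        "hours_by_category": {c.value: h for c, h in hours.items()},
        # Simplifying assumption: every automated hour is freed.
        "projected_hours_freed": hours[Category.AUTOMATE],
        "skills_to_validate": skills,
    }


# Illustrative map for one role; tasks and hours are invented for the example.
talent_specialist = [
    Task("Resume screening", Category.AUTOMATE, 10.0),
    Task("Shortlist review", Category.AUGMENT, 6.0,
         ("AI output validation", "exception handling")),
    Task("Final hiring conversation", Category.HUMAN_ONLY, 8.0),
]
summary = summarise_role(talent_specialist)
print(summary)
```

Keeping the supervision skills on the augmented tasks themselves means the list of competencies to validate falls out of the map, rather than being written separately.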

The second phase is designing the split. Leadership reviews the classification and signs off. The readiness plan is built around the new responsibilities: what the role looks like after the division is made, what new skills it requires, and how those skills will be developed and validated before deployment. This is where AI-powered talent evaluation infrastructure matters most: without a way to assess whether people are genuinely ready for the new operating model, the deployment decision is made on assumption.

The third phase is deployment and measurement. The new operating model goes live for the chosen role. Performance is tracked. Handoff protocols are tested against real task conditions. The validated competencies are confirmed in the operating environment, not just in training. Results are measured – time saved, quality improved, cost reduced. Once proven for one role, the model expands to the next role, then the next department.

EASE (Engine for AI-Based Skill Validation) sits across all three phases. It provides the AI-powered assessments that confirm readiness before deployment, the task-level validation data that identifies where gaps exist before they become delivery problems, and the normalised readiness signals that make it possible to compare readiness across roles, departments, and geographies without the inconsistency that human evaluation introduces at scale.

What this produces is not a dashboard of completion rates. It is a deployment-grade readiness picture: specific to the role, specific to the task, specific to the competency the new operating model requires. The decision to deploy is made on evidence, not optimism.

The transition from AI-Augmented to AI-First is not a technology decision. It is a readiness decision. And readiness decisions are only as good as the data behind them.

The Leadership Decision That Changes Everything

Every AI transformation programme eventually reaches a moment where the strategic framing has to give way to something more operational. The workshops are done. The vision is aligned. The tools are procured. And now the question is: where, exactly, do we start? Which role? Which department? Which tasks?

The answer that produces results is always the same: start with the map. Pick one job role in one department. Spend a week mapping every task that role performs. Classify each one. Quantify the time. Identify the gaps. Design the readiness plan for the new responsibilities. Validate the competency before the model goes live. Measure the outcome.

Then do it again for the next role. Then the next department. The model compounds. Each iteration produces a more precise picture of how the organisation’s workforce relates to AI, and each deployment builds the institutional confidence that the next one will also work.

This is how organisations move from AI-Augmented to AI-First without the organisation-wide disruption that large-scale rollouts almost always produce. Not a big bang. A sequence of proven transitions, each one building on the evidence the previous one generated.

The practical starting point: Nuvepro’s Task Intelligence Audit takes one job role in your target department and maps it completely in one to two weeks: every task classified, hours saved projected, readiness gaps identified, validation standards defined. You see the picture clearly before committing further budget or broader rollout.

The organisations that will look back on 2026 as the year they genuinely became AI-First are not the ones that licensed the most tools or trained the most people. They are the ones that started with the hardest and most specific question: which task, in which role, is ready to change today, and do we have the evidence to prove our people are ready to operate when it does?

You Already Have What You Need. You Are Missing the Map.

The business context that makes AI transformation work is not something you have to create. It already exists. It lives in the knowledge your people carry about how the work actually gets done – the judgment calls, the exceptions, the things that are never in the process documentation but always in the room when a difficult decision gets made.

What most organisations are missing is not capability or ambition. It is the infrastructure to capture that context, map it against what AI can and cannot own, and build the readiness that the new operating model genuinely requires, not the readiness that a completion certificate implies.

EASE (Engine for AI-Based Skill Validation) is that infrastructure. Through AI-based skill evaluation grounded in real task performance rather than test recall, it produces readiness signals that are specific enough to make deployment decisions on, transparent enough to share with the people being deployed, and consistent enough to trust across every role and every geography the transformation touches.

Automate. Augment. Stay Human. Three categories. One map. And the infrastructure to know, before deployment, whether your people are ready to operate inside it.

The map is available. The tools to validate readiness against it are available. The only thing that changes the outcome now is the decision to start with the right question: not “how do we adopt AI?” but “who owns what now, and do we have the evidence to prove our people are ready for that?”

At this stage, the question isn’t whether AI fits into your organisation. It’s whether your people know how to work with it. That’s where enterprise AI training programs need to evolve: towards AI training with hands-on labs that reflect real decisions and real work. Nuvepro operates in that space, helping bring “Automate. Augment. Stay Human.” to life in practice. Explore more at www.nuvepro.ai